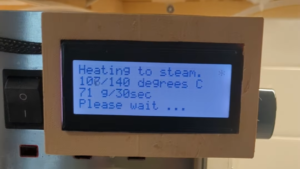

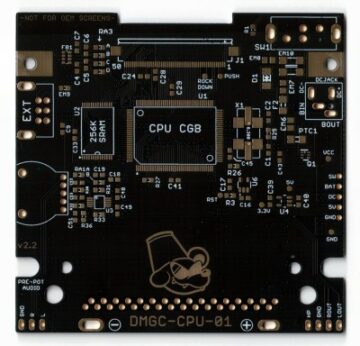

Essentially, it uses a Raspberry Pi and a ReSpeaker four-mic array to listen to conversations in the room. It records 15-20 seconds of audio and sends that to OpenAI's Whisper API to generate a transcript. This repeats until five minutes of audio have been collected, then the entire transcript is sent through GPT-4 to extract an image prompt from a single topic in the conversation. That prompt is then shipped off to Stable Diffusion to get an image to display on the screen. As you can imagine, the images generated run the gamut from really weird to really awesome.
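The record, transcribe, prompt, render loop described above can be sketched roughly as follows. This is a minimal sketch, not the project's actual code: `run_cycle` and its four callables are hypothetical names, and each callable stands in for a real service (microphone capture, the transcription API, GPT-4, Stable Diffusion) so the control flow can be read and tested without any network access.

```python
from typing import Callable, List, Tuple

CHUNK_SECONDS = 20        # the write-up says 15-20 s per recording
WINDOW_SECONDS = 5 * 60   # five minutes of audio per generated image


def run_cycle(
    record_chunk: Callable[[int], bytes],
    transcribe: Callable[[bytes], str],
    extract_prompt: Callable[[str], str],
    generate_image: Callable[[str], bytes],
    chunk_seconds: int = CHUNK_SECONDS,
    window_seconds: int = WINDOW_SECONDS,
) -> Tuple[str, bytes]:
    """Collect one window of transcribed audio and turn it into an image.

    All four callables are assumptions standing in for real services.
    """
    pieces: List[str] = []
    collected = 0
    while collected < window_seconds:
        audio = record_chunk(chunk_seconds)   # blocks while recording
        text = transcribe(audio).strip()
        if text:                              # drop empty transcripts
            pieces.append(text)
        collected += chunk_seconds
    transcript = " ".join(pieces)
    prompt = extract_prompt(transcript)       # one topic -> image prompt
    return prompt, generate_image(prompt)
```

Passing the services in as functions keeps the loop independent of any particular API client, which also makes it easy to swap in stubs while tuning the chunk and window lengths.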

Natural lulls in conversation presented a bit of a problem: transcripts were still being generated during silences, presumably because of ambient noise. The answer was voice activity detection software, which gives a probability that a voice is present.
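One way to act on those probabilities is to gate each recording before it is sent for transcription. The sketch below assumes some detector has already produced a per-frame speech probability between 0 and 1; the function name `has_speech` and both threshold values are illustrative guesses, not settings taken from the build.

```python
from typing import Iterable


def has_speech(
    frame_probs: Iterable[float],
    threshold: float = 0.5,            # assumed cutoff for a "voiced" frame
    min_voiced_fraction: float = 0.2,  # assumed share of voiced frames needed
) -> bool:
    """Return True if enough frames in a chunk look like speech."""
    probs = list(frame_probs)
    if not probs:
        return False                   # nothing recorded, nothing to transcribe
    voiced = sum(1 for p in probs if p >= threshold)
    return voiced / len(probs) >= min_voiced_fraction
```

A chunk that fails this check can simply be skipped, so ambient noise never reaches the transcription step.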

Naturally, people were curious about the prompts for the images, so [TheMorehavoc] made a little gallery sign with a MagTag that uses Adafruit.io as the MQTT broker. Build video is up after the break, and you can check out the images here (warning, some are NSFW).
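For a rough idea of how the prompt might reach the sign, here is a minimal sketch of the publishing side. Adafruit IO feeds are addressed over MQTT as `username/feeds/feed-key`; everything else here is an assumption, including the function names, the 200-character cap, and the `client` argument, which can be any object with a `publish(topic, payload)` method (an already-connected paho-mqtt client, for example).

```python
def feed_topic(username: str, feed_key: str) -> str:
    """Build the Adafruit IO MQTT topic for a feed."""
    return f"{username}/feeds/{feed_key}"


def publish_prompt(client, username: str, feed_key: str,
                   prompt: str, max_chars: int = 200):
    """Send the latest image prompt to the feed the sign subscribes to.

    `client` is assumed to be connected already; `max_chars` is a
    hypothetical limit to keep the text readable on a small display.
    """
    text = " ".join(prompt.split())[:max_chars]  # collapse whitespace, trim
    topic = feed_topic(username, feed_key)
    client.publish(topic, text)
    return topic, text
```

The sign would then subscribe to the same topic and redraw whenever a new prompt arrives.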

- Source: https://hackaday.com/2023/09/22/whisperframe-depicts-the-art-of-conversation/
